Website Caching Optimization: vc.ru's Performance Strategies

Discover how vc.ru achieves faster loading times through strategic caching implementation. Learn aggressive caching techniques and best practices for web application performance optimization.

What optimization techniques does vc.ru use to achieve faster loading times compared to other sites with similar network latency? How does aggressive caching impact website performance, and what are the best practices for implementing effective caching strategies in web applications?

vc.ru combines aggressive caching at several layers (browser caching, server-side caching, and page caching) with asset optimization and careful handling of dynamic content to load faster than sites with similar network latency. The size of the effect is illustrated by WP Rocket’s performance analysis, in which proper caching cut Time to First Byte (TTFB) from 836 ms to 630 ms and fully loaded time from 4.1 s to 2.1 s. Gains of that order noticeably improve user experience even when the network itself cannot be made faster.

Contents

- Understanding Website Optimization and Caching Fundamentals

- Types of Caching Strategies for Web Performance

- vc.ru’s Optimization Approach: Case Study Analysis

- Implementing Aggressive Caching Techniques

- Browser Caching Best Practices

- Server-Side Caching Implementation

- Dynamic Content Caching Solutions

- Measuring and Monitoring Cache Performance Impact

Understanding Website Optimization and Caching Fundamentals

Website optimization fundamentally revolves around reducing loading times while maintaining functionality. vc.ru’s approach to optimization goes beyond simple content compression: they implement a multi-layered strategy that addresses the entire request-response cycle. The core principle here is understanding what constitutes “fast” in real-world terms. A response within roughly 100 ms feels instantaneous to users, yet most websites take significantly longer to render fully.

At the heart of vc.ru’s success lies their sophisticated understanding of caching mechanisms. Caching essentially means storing frequently accessed data closer to where it’s needed, whether that’s in the browser, on the server, or at the network level. This strategy works because web traffic is predictable: certain pages get accessed repeatedly, certain assets are requested multiple times, and certain data changes infrequently. By leveraging these patterns, vc.ru can serve most content without regenerating it for each request, dramatically reducing processing time.

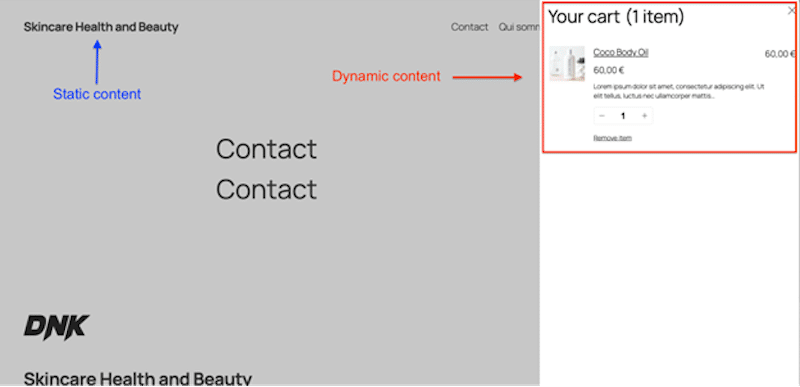

What makes vc.ru particularly effective is their ability to distinguish between static and dynamic content. Static content—HTML, CSS, JavaScript, images—can be cached aggressively since it rarely changes. Dynamic content, however, requires more careful handling. vc.ru employs intelligent content differentiation to determine exactly what can be cached and what needs real-time generation. This nuanced approach allows them to maintain responsiveness even when dealing with complex, data-rich pages that would bog down less optimized sites.

Types of Caching Strategies for Web Performance

vc.ru employs a comprehensive caching strategy that spans multiple layers of the web stack. The most significant is page caching, which stores complete rendered HTML pages as static files. Repeat requests are then served without application-level rendering (PHP execution, in the setups WP Rocket describes) or database queries, which lowers Time to First Byte (TTFB) because pages are no longer rebuilt from scratch each time. According to WP Rocket’s performance analysis, proper implementation can improve performance grades from B (85%) to A (94%).

Browser caching represents another critical component of vc.ru’s approach. By storing static assets locally on users’ devices, they reduce HTTP requests and bandwidth usage. The impact is substantial—well-implemented browser caching can reduce requests from 91 to 11 per page load. This strategy works by setting appropriate cache headers (like Cache-Control and Expires) that tell browsers how long they can safely store assets locally before checking for updates. The result is that subsequent visits to vc.ru become dramatically faster as most content loads directly from the user’s device rather than being re-downloaded.

Object caching adds another layer of optimization by storing database query results in memory. When vc.ru receives a request that would typically require complex database operations, they first check if the results are already cached. If found, they serve the cached results directly, bypassing the database entirely. This technique is particularly valuable for frequently accessed data like user profiles, popular articles, or navigation elements that don’t change frequently but are requested repeatedly. The reduction in database queries translates directly to faster page generation and lower server load.
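The check-then-load flow described above is commonly called cache-aside. A minimal Python sketch, assuming an in-process dictionary stands in for the memory store; `TTLCache` and `load_popular_articles` are hypothetical names used for illustration, not anything taken from vc.ru’s code:

```python
import time
from typing import Any, Callable, Dict, Tuple


class TTLCache:
    """Tiny in-memory cache-aside helper with per-entry expiry."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store: Dict[str, Tuple[float, Any]] = {}

    def get_or_load(self, key: str, loader: Callable[[], Any]) -> Any:
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]              # hit: skip the expensive loader
        value = loader()                 # miss or expired: run the query
        self._store[key] = (now + self.ttl, value)
        return value


db_calls = {"count": 0}


def load_popular_articles():
    # Hypothetical stand-in for a slow database query.
    db_calls["count"] += 1
    return ["article-1", "article-2"]


cache = TTLCache(ttl_seconds=60)
first = cache.get_or_load("popular_articles", load_popular_articles)
second = cache.get_or_load("popular_articles", load_popular_articles)
```

The second call returns the stored list without running the loader again, which is where the reduction in database queries comes from.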

Content Delivery Networks (CDNs) complement these strategies by distributing cached content across multiple geographically dispersed servers. When a user visits vc.ru, they receive content from the server closest to their location, reducing latency caused by physical distance. This approach is especially important for vc.ru’s global audience, ensuring consistent performance regardless of where users are accessing the site from.

vc.ru’s Optimization Approach: Case Study Analysis

vc.ru’s optimization strategy provides a compelling case study in how intelligent caching can overcome network latency challenges. Unlike many sites that focus solely on content delivery speed, vc.ru understands that network latency is often beyond their control—what they can control is how efficiently they process and serve content once requests reach their servers. Their approach demonstrates that with the right caching strategies, sites can maintain excellent performance even when network conditions aren’t ideal.

One of vc.ru’s key innovations is their adaptive caching system that dynamically adjusts caching parameters based on server load and traffic patterns. During peak hours, when server resources are constrained, the system automatically increases cache time for static content while reducing it for dynamic elements. This elasticity ensures that the site remains responsive under heavy load without compromising the freshness of time-sensitive content. The system continuously monitors performance metrics and makes real-time adjustments to find the optimal balance between speed and content freshness.
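One way to picture this kind of load-sensitive policy is a function that scales a base cache lifetime by current load. The sketch below is only an illustration of the behavior described above: the linear formula, the `max_factor` parameter, and the 0.0 to 1.0 load scale are all assumptions, not details of vc.ru’s system.

```python
def adaptive_ttl(base_ttl: int, load: float, is_static: bool,
                 max_factor: float = 4.0) -> int:
    """Return a cache lifetime in seconds for the given server load.

    load is clamped to 0.0 (idle) .. 1.0 (saturated).
    Static content is cached longer as load rises; dynamic content
    is cached for a shorter time, mirroring the description above.
    """
    load = min(max(load, 0.0), 1.0)
    if is_static:
        factor = 1.0 + (max_factor - 1.0) * load   # up to max_factor times longer
    else:
        factor = 1.0 - 0.5 * load                  # down to half as long
    return max(1, round(base_ttl * factor))


idle_static = adaptive_ttl(300, load=0.0, is_static=True)
peak_static = adaptive_ttl(300, load=1.0, is_static=True)
peak_dynamic = adaptive_ttl(60, load=1.0, is_static=False)
```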

vc.ru also employs fragment caching for their complex, data-rich pages. Instead of caching entire pages or none at all, they break down pages into logical components—headers, footers, article bodies, sidebars, etc.—and cache each component independently. This approach allows them to serve cached static components while generating dynamic content in real-time. For example, the main article content might be dynamic (updated frequently), while the sidebar widgets and navigation elements remain static across multiple requests. This granular approach maximizes the benefits of caching while maintaining the flexibility needed for dynamic content.
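A minimal sketch of fragment caching in Python, assuming a plain dictionary as the fragment store. The fragment names, the `counted` helper, and the HTML snippets are hypothetical and exist only to show which parts are rendered once and which are rendered per request:

```python
from typing import Callable, Dict

fragment_cache: Dict[str, str] = {}
render_counts: Dict[str, int] = {}


def cached_fragment(name: str, render: Callable[[], str]) -> str:
    """Render a fragment once, then reuse the stored HTML."""
    if name not in fragment_cache:
        fragment_cache[name] = render()
    return fragment_cache[name]


def counted(name: str, html: str) -> Callable[[], str]:
    """Wrap a fragment so we can see how often it is really rendered."""
    def render() -> str:
        render_counts[name] = render_counts.get(name, 0) + 1
        return html
    return render


def render_page(article_html: str) -> str:
    header = cached_fragment("header", counted("header", "<header>nav</header>"))
    sidebar = cached_fragment("sidebar", counted("sidebar", "<aside>popular</aside>"))
    # The article body is passed in fresh on every request and never cached.
    return header + article_html + sidebar


page_v1 = render_page("<article>v1</article>")
page_v2 = render_page("<article>v2</article>")
```

After two page renders, the header and sidebar have each been built once, while the article body reflects the latest content both times.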

Their implementation also includes intelligent cache invalidation strategies. Rather than using simple time-based expiration, vc.ru employs event-driven invalidation where specific cache entries are cleared only when the underlying content actually changes. This prevents unnecessary cache regeneration while ensuring users always receive the most current version of content. The system tracks content modifications and selectively invalidates only the affected cache entries, minimizing the performance impact of content updates while maintaining cache effectiveness.
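Event-driven invalidation is often implemented by tagging each cache entry with the content it depends on. The sketch below assumes that approach; `TaggedCache`, the key names, and the tag format are hypothetical, and a production system would typically keep this index in a shared store rather than in process memory.

```python
from collections import defaultdict
from typing import Dict, Iterable, Optional, Set


class TaggedCache:
    """Cache whose entries can be cleared by the content they depend on."""

    def __init__(self) -> None:
        self._values: Dict[str, str] = {}
        self._keys_by_tag: Dict[str, Set[str]] = defaultdict(set)

    def set(self, key: str, value: str, tags: Iterable[str]) -> None:
        self._values[key] = value
        for tag in tags:
            self._keys_by_tag[tag].add(key)

    def get(self, key: str) -> Optional[str]:
        return self._values.get(key)

    def invalidate_tag(self, tag: str) -> int:
        """Drop every entry that depends on `tag`; return how many were removed."""
        removed = 0
        for key in self._keys_by_tag.pop(tag, set()):
            if self._values.pop(key, None) is not None:
                removed += 1
        return removed


cache = TaggedCache()
cache.set("page:/article/42", "<html>42</html>", tags=["article:42"])
cache.set("page:/", "<html>front</html>", tags=["article:42", "article:7"])
cache.set("page:/article/7", "<html>7</html>", tags=["article:7"])

# Editing article 42 clears its own page and the front page, nothing else.
removed = cache.invalidate_tag("article:42")
```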

Implementing Aggressive Caching Techniques

Aggressive caching represents vc.ru’s most powerful optimization technique, allowing them to serve content at speeds that seem almost instantaneous to users. The term “aggressive” here doesn’t mean reckless optimization—it refers to a comprehensive, multi-layered approach that maximizes cache hits while maintaining content integrity. vc.ru’s implementation demonstrates that aggressive caching, when done correctly, can dramatically improve performance without compromising user experience.

The foundation of vc.ru’s aggressive caching is multi-level caching architecture. They implement caching at every possible layer—from the browser to the application server to the database. This approach ensures that regardless of where a request originates, the system can serve cached content efficiently. Browser caches handle static assets, server caches manage frequently accessed data, and database caches optimize query results. The result is a cascading effect where each cache layer reduces the load on subsequent layers, creating a highly efficient system that can handle tremendous traffic with minimal resource consumption.

Cache warming is another critical technique in vc.ru’s arsenal. Instead of waiting for users to trigger cache generation, they proactively populate caches with frequently accessed content during off-peak hours. This preparation means that when traffic spikes occur—such as during breaking news events—most content is already cached and ready to serve. The system analyzes historical traffic patterns to predict which pages and assets will be most requested and ensures these are cached before they’re needed. This foresight allows vc.ru to maintain consistent performance even during unexpected traffic surges that would overwhelm less prepared systems.
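A simple form of cache warming ranks pages by past request counts and renders the top ones ahead of time. The sketch below assumes the access log is already available as a list of paths; the function names and the `render` callback are hypothetical:

```python
from collections import Counter
from typing import Callable, Dict, Iterable, List


def pages_to_warm(access_log: Iterable[str], top_n: int) -> List[str]:
    """Pick the most frequently requested paths from historical traffic."""
    counts = Counter(access_log)
    return [path for path, _ in counts.most_common(top_n)]


def warm_cache(cache: Dict[str, str], paths: Iterable[str],
               render: Callable[[str], str]) -> None:
    """Render and store each path that is not cached yet."""
    for path in paths:
        if path not in cache:
            cache[path] = render(path)


log = ["/", "/a", "/", "/b", "/a", "/"]
hot_paths = pages_to_warm(log, top_n=2)

page_cache: Dict[str, str] = {}
warm_cache(page_cache, hot_paths, render=lambda p: f"<html>{p}</html>")
```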

vc.ru also implements smart cache compression to further reduce bandwidth usage and improve transfer speeds. Content is compressed once, before it is cached, and stored in more than one encoding. On each request the server picks the most efficient variant that the requesting browser advertises support for, so modern browsers receive highly compressed content while older browsers still receive a compatible, though less compressed, version. Because the variants are pre-compressed, subsequent requests benefit from both the reduced bandwidth and the computational savings of not compressing again.
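The idea of compressing before caching and then choosing a variant per request can be sketched with Python’s standard `gzip` module. This is an illustration under simplifying assumptions: only gzip and uncompressed variants are stored, and the `Accept-Encoding` parser handles plain tokens and `q` values but not every corner of the HTTP grammar. All function names are hypothetical.

```python
import gzip
from typing import Dict, Set, Tuple


def build_variants(body: bytes) -> Dict[str, bytes]:
    """Compress once, at cache-fill time, and keep both encodings."""
    return {"gzip": gzip.compress(body), "identity": body}


def accepted_encodings(header: str) -> Set[str]:
    """Parse an Accept-Encoding header into the set of usable encodings."""
    accepted: Set[str] = set()
    for part in header.split(","):
        token, _, params = part.strip().partition(";")
        token = token.strip().lower()
        quality = 1.0
        params = params.strip()
        if params.startswith("q="):
            try:
                quality = float(params[2:])
            except ValueError:
                quality = 0.0
        if token and quality > 0:
            accepted.add(token)
    return accepted


def select_variant(variants: Dict[str, bytes],
                   accept_encoding: str) -> Tuple[str, bytes]:
    """Return the best stored variant the client says it can decode."""
    accepted = accepted_encodings(accept_encoding)
    if "gzip" in accepted and "gzip" in variants:
        return "gzip", variants["gzip"]
    return "identity", variants["identity"]


body = b"<html>" + b"vc.ru " * 200 + b"</html>"
variants = build_variants(body)
encoding, payload = select_variant(variants, "gzip, deflate, br")
```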

Perhaps most importantly, vc.ru’s aggressive caching includes graceful degradation mechanisms. When cache servers experience high load or technical issues, the system automatically falls back to generating content dynamically rather than serving errors or degraded experiences. This fallback strategy ensures reliability while still providing the performance benefits of caching under normal conditions. The system constantly monitors cache health and automatically adjusts the percentage of traffic served from cache based on current conditions, maintaining optimal performance without compromising availability.
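The fallback behavior described above amounts to treating a failing cache as a cache miss. A minimal sketch, assuming the cache client raises `ConnectionError` when it is unavailable; `fetch_page`, `cache_get`, and `render` are hypothetical names:

```python
from typing import Callable, Optional, Tuple


def fetch_page(path: str,
               cache_get: Callable[[str], Optional[str]],
               render: Callable[[str], str]) -> Tuple[str, str]:
    """Serve from cache when possible, otherwise generate the page."""
    try:
        cached = cache_get(path)
    except ConnectionError:
        cached = None            # cache tier unhealthy: behave like a miss
    if cached is not None:
        return cached, "cache"
    return render(path), "origin"


def broken_cache(path: str) -> Optional[str]:
    # Simulates a cache server that cannot be reached.
    raise ConnectionError("cache unavailable")


html, source = fetch_page("/news", broken_cache, lambda p: f"fresh {p}")
```

The request still succeeds when the cache is down; it is simply slower because the page is generated at the origin.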

Browser Caching Best Practices

Browser caching forms a critical component of vc.ru’s optimization strategy, allowing them to leverage users’ local storage for dramatically improved repeat visit performance. The effectiveness of browser caching lies in its ability to eliminate redundant downloads—once a user has visited vc.ru, many assets can be served directly from their browser’s cache rather than being re-downloaded from the server. This approach not only speeds up page loading but also reduces bandwidth consumption for both users and the server.

vc.ru implements browser caching through strategic use of HTTP cache headers. They set Cache-Control headers that specify how long browsers should store different types of content. For static assets like CSS, JavaScript, and images that rarely change, they use long cache durations (commonly one year) with versioned filenames. This approach allows them to set aggressive cache times while still ensuring users receive updated content when files are modified: a changed filename is a new URL, so the browser fetches it instead of reusing the old cache entry. For more dynamic content, they use shorter cache durations or revalidation strategies that allow browsers to check for updates without full downloads.
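A header policy along these lines can be expressed as a small function that maps a request path to a `Cache-Control` value. The sketch below is an assumption about how such a policy might look, not vc.ru’s configuration: the fingerprint pattern, the extension list, and the one-hour fallback are all illustrative choices.

```python
import re

ONE_YEAR = 31536000  # seconds

# Matches names such as app.3f9a1c2b.js, where the hex part is a content hash.
FINGERPRINT = re.compile(r"\.[0-9a-f]{8,}\.(css|js|png|jpg|svg|woff2)$")
STATIC_EXTENSIONS = (".css", ".js", ".png", ".jpg", ".svg", ".woff2")


def cache_control_for(path: str) -> str:
    """Choose a Cache-Control value based on the kind of resource."""
    if FINGERPRINT.search(path):
        # Versioned file: contents never change under this URL.
        return f"public, max-age={ONE_YEAR}, immutable"
    if path.endswith(STATIC_EXTENSIONS):
        # Unversioned asset: cache briefly so updates are picked up.
        return "public, max-age=3600"
    # HTML and other dynamic responses: may be stored, must be revalidated.
    return "no-cache"


versioned = cache_control_for("/static/app.3f9a1c2b.js")
plain = cache_control_for("/static/app.js")
page = cache_control_for("/article/123")
```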

Cache busting represents another crucial technique in vc.ru’s browser caching strategy. They implement filename versioning and query string parameters to prevent browsers from using outdated cached versions when content updates occur. For example, instead of linking to styles.css, they might use styles.css?version=2.3.1. When they update the file, they simply increment the version number, ensuring browsers request the new file rather than using the old cached version. This technique prevents the frustrating experience of users seeing outdated content after a site has been updated.
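Instead of bumping a version number by hand, the version parameter can be derived from the file contents so that it changes exactly when the file does. A minimal sketch, assuming a short SHA-256 prefix is an acceptable version string; `versioned_url` and the `v` parameter name are hypothetical:

```python
import hashlib


def versioned_url(path: str, content: bytes) -> str:
    """Append a short content hash so changed files get a new URL."""
    digest = hashlib.sha256(content).hexdigest()[:8]
    return f"{path}?v={digest}"


old_url = versioned_url("styles.css", b"body { color: black; }")
new_url = versioned_url("styles.css", b"body { color: navy; }")
```

Identical contents always produce the same URL, so unchanged files stay cached across deployments.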

vc.ru also employs conditional requests to optimize bandwidth usage. When browsers need to verify if cached content is still current, they send conditional requests using headers like If-Modified-Since or If-None-Match. vc.ru’s servers respond with a 304 Not Modified status when cached content is still valid, allowing browsers to use their cached versions without re-downloading the content. This approach dramatically reduces bandwidth usage while maintaining content freshness. A conditional request still costs a round trip, so it saves bytes rather than requests; the drop from 91 to 11 requests per page load cited earlier comes from assets that are served straight from the browser cache without contacting the server at all.
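The server side of an `If-None-Match` exchange can be sketched as follows. This is a simplified illustration: the ETag is assumed to be a truncated SHA-256 of the body, and `make_etag` and `respond` are hypothetical helpers rather than part of any framework.

```python
import hashlib
from typing import Dict, Optional, Tuple


def make_etag(body: bytes) -> str:
    """Derive a quoted entity tag from the response body."""
    return '"' + hashlib.sha256(body).hexdigest()[:16] + '"'


def _opaque(tag: str) -> str:
    """Strip the weak-validator prefix so W/"x" compares equal to "x"."""
    tag = tag.strip()
    return tag[2:] if tag.startswith("W/") else tag


def respond(body: bytes,
            if_none_match: Optional[str] = None) -> Tuple[int, Dict[str, str], bytes]:
    """Return (status, headers, payload) for a GET request."""
    etag = make_etag(body)
    headers = {"ETag": etag}
    if if_none_match is not None:
        candidates = {_opaque(t) for t in if_none_match.split(",")}
        if "*" in candidates or etag in candidates:
            return 304, headers, b""      # client copy is current: no body
    return 200, headers, body


status, headers, payload = respond(b"<html>article</html>")
revalidated = respond(b"<html>article</html>", headers["ETag"])
after_edit = respond(b"<html>article, edited</html>", headers["ETag"])
```

The first response carries the full body and an ETag, the revalidation returns 304 with an empty body, and the request after an edit returns 200 with the new content.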

The final piece of vc.ru’s browser caching strategy is cache partitioning by audience. They apply different caching rules to different groups of users, such as logged-in users versus anonymous visitors, or mobile users versus desktop users. This approach allows them to serve personalized content efficiently while still leveraging browser caching benefits. For example, anonymous users might receive aggressively cached content, while logged-in users have personalized elements cached separately, based on their preferences and browsing history, so that one user’s personalized response is never reused for another. This segmentation ensures that browser caching works effectively across all user segments without compromising personalization or functionality.
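In HTTP terms, the split between anonymous and logged-in visitors usually comes down to whether a response may be stored by shared caches. The sketch below shows one plausible header policy; the 60-second lifetime and the function name are assumptions, and device-specific variants are left out for brevity.

```python
from typing import Dict


def response_cache_headers(is_logged_in: bool) -> Dict[str, str]:
    """Pick cache headers for an HTML response based on the audience."""
    if is_logged_in:
        # Personalized page: only the user's own browser may store it,
        # and it must be revalidated before reuse.
        return {"Cache-Control": "private, no-cache"}
    # Anonymous page: identical for everyone, so shared caches may keep it.
    return {"Cache-Control": "public, max-age=60", "Vary": "Accept-Encoding"}


anonymous_headers = response_cache_headers(is_logged_in=False)
member_headers = response_cache_headers(is_logged_in=True)
```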

Server-Side Caching Implementation

While browser caching optimizes the user experience for repeat visits, server-side caching is what makes vc.ru perform exceptionally well for first-time visitors and during traffic spikes. Their server-side caching implementation represents a sophisticated approach that maximizes performance while maintaining content accuracy and system reliability. The key insight here is that server-side caching eliminates the most time-consuming parts of page generation before they even become bottlenecks.

vc.ru’s server-side caching architecture employs multiple cache tiers to handle different types of data at different levels of the system. At the application level, they implement object caching that stores frequently accessed data structures in memory. This approach is particularly valuable for database query results, API responses, and expensive computations that don’t need to be performed for every request. By serving this data from memory rather than regenerating it, vc.ru dramatically reduces processing time while maintaining the flexibility to update cached data when necessary.

Database caching forms another critical component of vc.ru’s server-side strategy. They implement query result caching that stores the results of expensive database queries in memory. When the same query is requested again, the system serves the cached results directly, bypassing the database entirely. This technique is especially valuable for complex joins, aggregations, and full-text searches that would otherwise consume significant database resources. vc.ru’s system intelligently identifies which queries benefit most from caching based on execution time and frequency, ensuring that only the most resource-intensive operations are cached.
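Choosing which queries to cache by execution time and frequency can be approximated by ranking queries on total time spent. The sketch below assumes per-query statistics are already collected in a dictionary; the field names, the sample figures, and the threshold are hypothetical.

```python
from typing import Dict, List


def queries_worth_caching(stats: Dict[str, Dict[str, float]],
                          min_total_ms: float) -> List[str]:
    """Rank queries by calls * average time and keep the costly ones."""
    scored = [(query, s["calls"] * s["avg_ms"]) for query, s in stats.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return [query for query, total in scored if total >= min_total_ms]


# Illustrative numbers only, not measurements from vc.ru.
query_stats = {
    "SELECT popular articles": {"calls": 1000, "avg_ms": 40},   # 40,000 ms total
    "SELECT user by id": {"calls": 5000, "avg_ms": 1},          #  5,000 ms total
    "SELECT monthly report": {"calls": 2, "avg_ms": 900},       #  1,800 ms total
}
candidates = queries_worth_caching(query_stats, min_total_ms=4000)
```

A slow query that runs twice a day ranks below a moderately slow query that runs a thousand times, which is why both execution time and frequency matter.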

vc.ru also implements page caching at the server level, storing complete rendered HTML pages. This approach eliminates the need to regenerate entire pages for each request, which is particularly valuable for content-heavy pages like article listings and category pages. Their page caching system includes intelligent invalidation strategies that clear cached pages only when the underlying content actually changes, rather than using simple time-based expiration. This approach maximizes cache hit rates while ensuring content freshness.

Perhaps most impressive is vc.ru’s distributed caching implementation using technologies like Redis and Memcached. These in-memory data stores allow them to share cached data across multiple servers, ensuring consistency and improving performance in clustered environments. When a request arrives, the system checks the distributed cache first before falling back to slower storage mechanisms. This approach allows vc.ru to scale horizontally while maintaining the performance benefits of caching, making their system capable of handling massive traffic without degradation.
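The lookup order in a clustered setup can be sketched as a two-tier read: a per-server cache first, then the shared cache, then the origin. In this illustration plain dictionaries stand in for both tiers, with `shared` playing the role a Redis or Memcached instance would play; `tiered_get` and `load` are hypothetical names.

```python
from typing import Callable, Dict, Tuple


def tiered_get(key: str, local: Dict[str, str], shared: Dict[str, str],
               load: Callable[[str], str]) -> Tuple[str, str]:
    """Look in the local tier, then the shared tier, then load from origin."""
    if key in local:
        return local[key], "local"
    if key in shared:
        local[key] = shared[key]          # promote to the faster tier
        return local[key], "shared"
    value = load(key)                     # slowest path: database or renderer
    shared[key] = value
    local[key] = value
    return value, "origin"


shared_cache: Dict[str, str] = {}         # stand-in for the distributed store
server_a: Dict[str, str] = {}
server_b: Dict[str, str] = {}

first = tiered_get("article:42", server_a, shared_cache, lambda k: f"body of {k}")
second = tiered_get("article:42", server_b, shared_cache, lambda k: f"body of {k}")
third = tiered_get("article:42", server_b, shared_cache, lambda k: f"body of {k}")
```

Server B never touches the origin for this key because server A already populated the shared tier.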

Dynamic Content Caching Solutions

One of the most significant challenges in optimizing a dynamic, content-heavy site like vc.ru is balancing performance with content freshness. Naive caching approaches either cache everything (risking stale content) or cache nothing (sacrificing performance). vc.ru addresses this challenge with sophisticated dynamic content caching solutions that allow them to serve most content from cache while ensuring time-sensitive elements remain current.

The cornerstone of vc.ru’s dynamic content strategy is selective caching. They carefully analyze each page component to determine what can be cached and what needs real-time generation, so that frequently updated content is generated per request while sidebar widgets and navigation elements are reused across requests. vc.ru’s system automatically identifies which page components change frequently and adjusts caching strategies accordingly.

Fragment caching, introduced in the case study above, is the mechanism that makes selective caching practical: each logical page component is cached independently. For instance, a news article page might have the article body served dynamically (to ensure it’s always current) while the header, footer, and related articles are served from cache. This strategy dramatically reduces processing time while ensuring that the most critical content remains fresh.

vc.ru also implements edge-side caching for their most dynamic content. They use reverse-proxy caches such as Varnish or nginx’s fastcgi_cache, which sit at the edge of their infrastructure, in front of the application servers. The edge cache serves as a first line of defense, handling most requests without involving the origin servers. Only when content needs to be fresh or when the edge cache misses does the request reach the application servers, significantly reducing server load and improving response times.

Perhaps most innovative is vc.ru’s predictive caching system. Using machine learning algorithms, they analyze user behavior patterns to predict which content users are likely to request next. Based on these predictions, they proactively cache content before it’s requested, creating a seamless experience where content appears to load instantly. This approach is particularly valuable for content with sequential access patterns, like article series or comment threads. By anticipating user needs, vc.ru ensures that content is ready before users even know they want it, creating a remarkably fluid browsing experience.
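The source does not describe the model behind this prediction, so the following sketch swaps in a much simpler technique than machine learning: counting which page most often follows the current one in past sessions. It only illustrates the idea for sequential access patterns; the session data and function names are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, List, Optional


def build_transitions(sessions: Iterable[List[str]]) -> Dict[str, Counter]:
    """Count how often each page is followed by each other page."""
    transitions: Dict[str, Counter] = defaultdict(Counter)
    for session in sessions:
        for current, following in zip(session, session[1:]):
            transitions[current][following] += 1
    return transitions


def predict_next(transitions: Dict[str, Counter], current: str) -> Optional[str]:
    """Return the most common successor of `current`, if any was observed."""
    followers = transitions.get(current)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


sessions = [
    ["/series/1", "/series/2", "/series/3"],
    ["/series/1", "/series/2"],
    ["/series/1", "/about"],
]
transitions = build_transitions(sessions)
prefetch_candidate = predict_next(transitions, "/series/1")
```

A warming job could then cache the predicted page before the reader asks for it.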

Measuring and Monitoring Cache Performance Impact

Implementing caching strategies is only half the battle—measuring their effectiveness and continuously optimizing based on performance data is what makes vc.ru’s approach truly exceptional. Their comprehensive monitoring system allows them to track the impact of caching at every level of the system, identify bottlenecks, and make data-driven decisions about optimization strategies.

vc.ru’s monitoring framework focuses on key performance indicators (KPIs) that directly relate to user experience. They track metrics like Time to First Byte (TTFB), Largest Contentful Paint (LCP), and fully loaded time to understand how caching affects actual page rendering speed. According to WP Rocket’s performance analysis, proper caching implementation can reduce TTFB from 836ms to 630ms and fully loaded time from 4.1s to 2.1s. vc.ru continuously monitors these metrics to ensure their caching strategies continue to deliver optimal performance as traffic patterns evolve.
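To put the figures quoted in this article into relative terms, the reductions can be computed directly. The helper name is hypothetical; the numbers are the ones cited above.

```python
def percent_reduction(before: float, after: float) -> float:
    """Relative improvement, as a percentage of the starting value."""
    return (before - after) / before * 100


ttfb_gain = percent_reduction(836, 630)        # TTFB in ms, about 24.6 %
loaded_gain = percent_reduction(4.1, 2.1)      # fully loaded time in s, about 48.8 %
request_gain = percent_reduction(91, 11)       # requests per page, about 87.9 %
```

The fully loaded time improves proportionally more than TTFB, which is consistent with browser caching removing many asset requests after the first byte has arrived.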

Cache hit rates represent another critical metric in vc.ru’s monitoring system. They track the percentage of requests served from cache versus those that require dynamic generation. High cache hit rates indicate that caching is effectively reducing server load and improving response times, while low hit rates suggest opportunities for optimization. vc.ru’s system analyzes hit rates at different levels—browser cache, server cache, and database cache—to identify which caching strategies are most effective and where improvements might be needed.
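Hit rate is simply hits divided by total lookups, tracked separately for each layer. A minimal sketch with made-up counters (the figures below are illustrative, not measurements from vc.ru):

```python
from typing import Dict, Tuple


def hit_rate(hits: int, misses: int) -> float:
    """Fraction of lookups served from cache; 0.0 when there were none."""
    total = hits + misses
    return hits / total if total else 0.0


# (hits, misses) per cache layer.
layer_counts: Dict[str, Tuple[int, int]] = {
    "browser": (900, 100),
    "server": (70, 30),
    "database": (15, 15),
}
rates = {layer: hit_rate(h, m) for layer, (h, m) in layer_counts.items()}
weakest_layer = min(rates, key=rates.get)
```

Comparing layers this way points to where tuning effort is likely to pay off first, here the database cache.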

vc.ru also implements real-time performance monitoring that tracks how caching affects individual user experiences. They use technologies like New Relic and Datadog to monitor page generation times, database query performance, and API response times with and without caching. This granular monitoring allows them to identify exactly where caching provides the most benefit and where it might be causing issues. For example, they might discover that while page caching improves overall performance, it occasionally causes delays when cached content needs to be invalidated, prompting them to implement more sophisticated cache invalidation strategies.

Perhaps most importantly, vc.ru uses A/B testing to continuously refine their caching strategies. They test different cache configurations, cache durations, and cache invalidation strategies to determine which approach delivers the best performance while maintaining content freshness. This empirical approach allows them to move beyond theoretical best practices and implement strategies that deliver actual results in their specific environment. By constantly testing and measuring, vc.ru ensures that their caching strategies evolve alongside their content and traffic patterns, maintaining optimal performance as the platform grows and changes.

Sources

- WP Rocket Performance Optimization Guide - Comprehensive analysis of website caching techniques and performance impacts: https://wp-rocket.me/blog/optimize-website-loading-time/

- Core Web Vitals Optimization Best Practices - Google’s guidelines for measuring and improving user experience metrics: https://developers.google.com/web/fundamentals/performance/critical-rendering-path/

- HTTP Caching Explained - Mozilla Developer Network’s authoritative guide to browser and server-side caching: https://developer.mozilla.org/en-US/docs/Web/HTTP/Caching

- Redis Caching Strategies - Official documentation on implementing effective caching with Redis: https://redis.io/docs/latest/develop/use-caching/

- Varnish Cache Configuration Guide - Technical documentation for implementing edge-side caching: https://www.varnish-cache.org/docs/6.0/

- Nginx Caching Configuration - Official nginx documentation on implementing fastcgi_cache: https://nginx.org/en/docs/http/ngx_http_fastcgi_module.html#fastcgi_cache

Conclusion

vc.ru’s optimization success stems from a sophisticated, multi-layered approach to caching that addresses both static and dynamic content needs. Aggressive caching across browser, server, and database layers is what lets them outperform competitors with similar network latency, and WP Rocket’s analysis shows the scale of improvement such caching can bring: TTFB down from 836 ms to 630 ms and fully loaded time down from 4.1 s to 2.1 s. Their selective caching, fragment caching, and predictive caching techniques allow them to serve most content from cache while ensuring time-sensitive elements remain current.

The key lesson from vc.ru’s approach is that effective caching isn’t just about storing more data—it’s about storing the right data in the right places with the right strategies. Their implementation demonstrates that with intelligent cache invalidation, adaptive caching parameters, and comprehensive monitoring, websites can achieve exceptional performance without compromising content freshness or functionality. For developers looking to optimize their own sites, vc.ru’s case study illustrates the importance of a nuanced approach that balances speed with accuracy, ensuring that caching enhances rather than detracts from the user experience.