Prevent Duplicate Summaries in LangChain Chatbots Across Steps

Fix duplicate summaries in LangChain chatbots during multi-step processes such as business idea discussions. Use ConversationSummaryBufferMemory, redesigned prompts, and LangGraph agents to produce unique, evolving Persian summaries from the full chat history.

How to prevent a LangChain chatbot from generating duplicate summaries across consecutive steps despite using chat history?

I’ve implemented a LangChain chatbot that guides users through discussing business features and generates summaries of their ideas across a 6-step process. Each step has access to the full chat history to extract relevant information.

However, the chatbot occasionally produces identical summaries for two consecutive steps.

Current Prompt:

    prompt = """
    Role: You are a summarizer assistant that has a guideline:
    1. fetch the answers of RequiredQuestions that you have.
    2. create a text as its summary that is fluent
    and perfect about question and answers,
    so ignore the misc messages like \"hello\" or \"can i jump to next step?\".
    get the user chat history and give a summary of the idea and answers in Persian.
    Set the point of view to third person singular.
    RequiredQuestions: ``` {step_and_questions} ```
    Pay attention about ignoring the misc messages.
    Provide a complete, well-structured response. """

Example step_and_questions:

Current step is problem

and its questions are as follows (in Persian):

1- چه مشکلی را حل میکنید؟ (What problem are you solving?)

2- این مشکل چقدر جدی است؟ (How serious is this problem?)

3- چه کسانی با این مشکل مواجه هستند؟ (Who faces this problem?)

How can I redesign the prompt or chain logic to ensure unique, updated summaries for each step based on the evolving chat history?

Duplicate summaries in LangChain chatbots happen when the full chat history is passed into every prompt without any cue about what has changed, which is common in multi-step flows like your 6-step business idea discussion. Switch to ConversationSummaryBufferMemory so the model sees a token-limited running summary of older exchanges plus the most recent messages verbatim. Then adjust the prompt to ask for "updates since last summary" and name the current step explicitly, so each Persian summary builds on the previous one instead of restating it.

Contents

- Understanding Duplicate Summaries in LangChain Chatbots

- Leveraging LangChain Memory for Unique Outputs

- Redesigning Prompts for Step-Specific Summaries

- Implementing ConversationSummaryBufferMemory in Python LangChain

- Advanced Chain Logic with LangGraph Agents

- Testing and Debugging with LangSmith

- Sources

- Conclusion

Understanding Duplicate Summaries in LangChain Chatbots

Ever notice your LangChain chatbot spitting out the same summary twice in a row? It’s frustrating, right? In setups like yours—a 6-step business idea flow where each summary pulls from the full chat history—the model latches onto stable early details (like the core problem) and ignores subtle shifts from new answers.

Why does this creep in? When every step's prompt contains the entire history, most of the context is identical from one call to the next, and the model tends to reproduce the summary it already wrote. Your current prompt fetches "answers of RequiredQuestions" but never says what changed since the previous step. Misc messages get ignored, sure, but the history keeps growing, the newest answers become a small share of the context, and the output can come back unchanged.

The fix starts with smarter context control. LangChain memory types shine here, trimming history to essentials while preserving flow. Think of it as a rolling window: recent steps dominate, old ones condense.

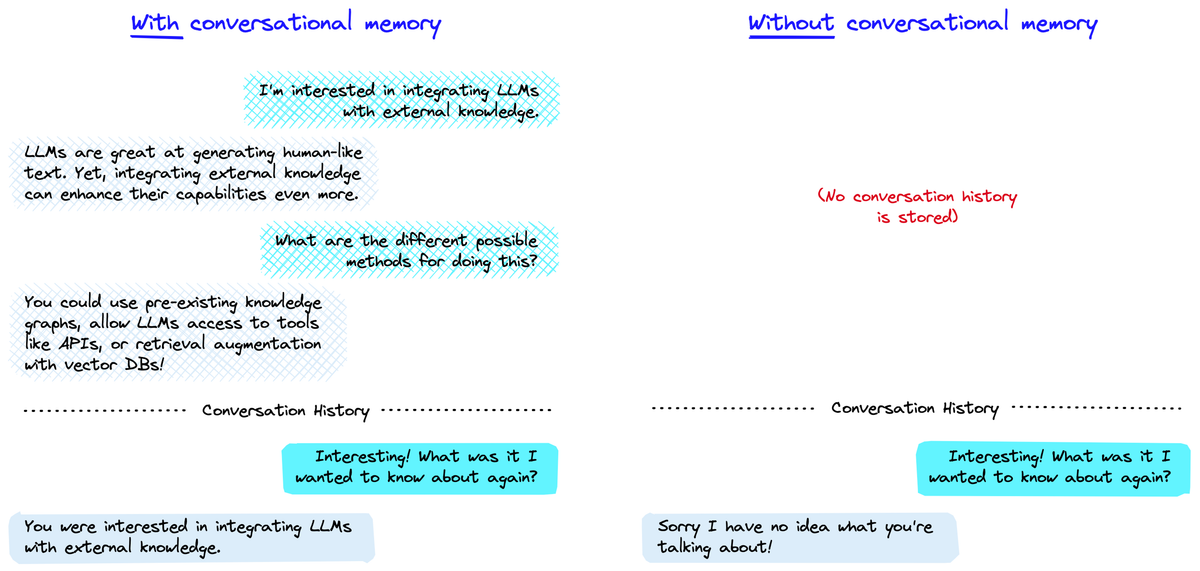

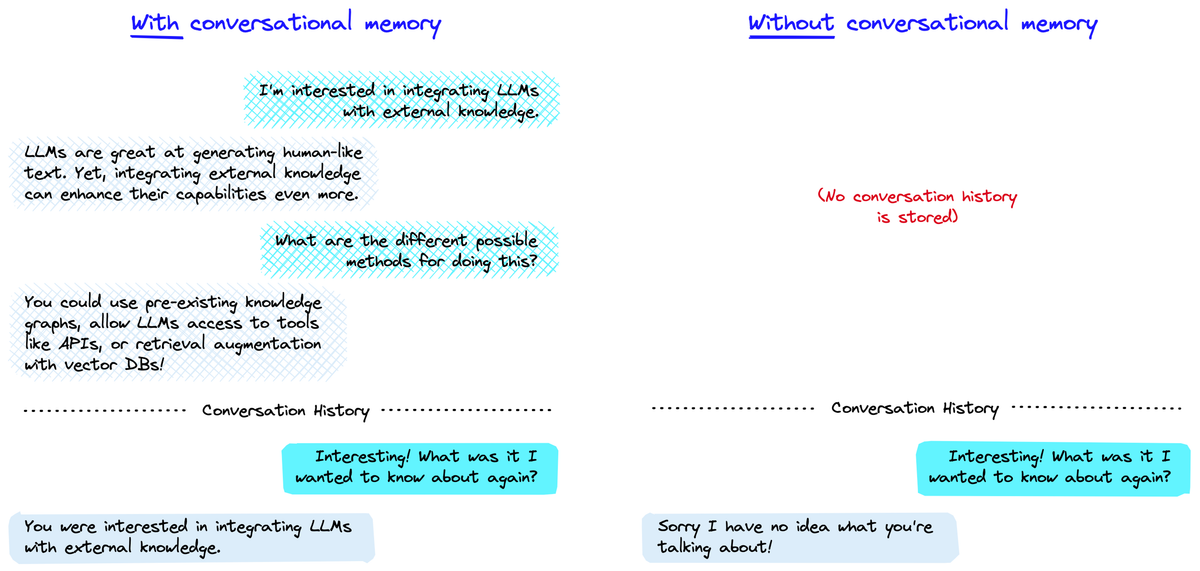

Leveraging LangChain Memory for Unique Outputs

LangChain memory isn’t just a buzzword—it’s your shield against repetition. Basic chat history works for short convos, but for multi-step marathons? Nope. ConversationSummaryMemory boils past exchanges into a tight recap, but it can still loop if not tuned.

The better fit is ConversationSummaryBufferMemory. It pairs a running summary of older history with the raw recent messages. Set max_token_limit (say, 1000 for your Persian outputs), and once the raw buffer exceeds it, the oldest messages are folded into the summary. Each step's prompt then sees a compact recap plus the latest answers, so new inputs carry more weight than the parts that have not changed.

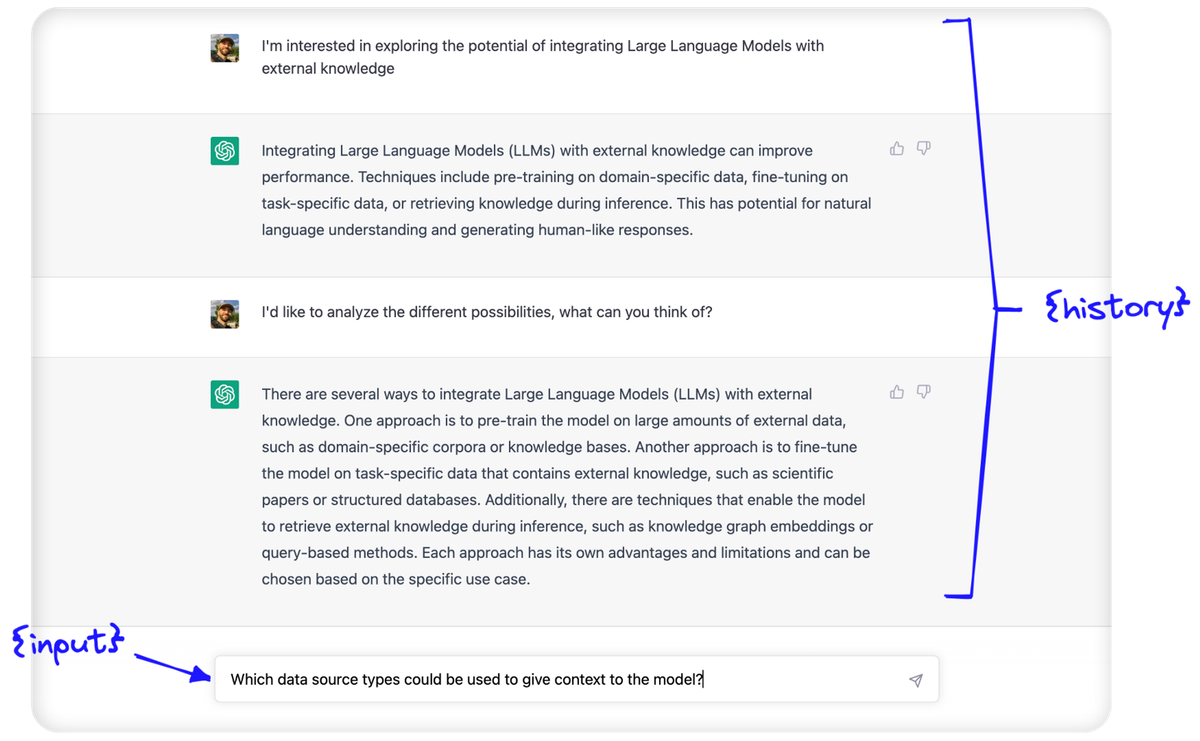

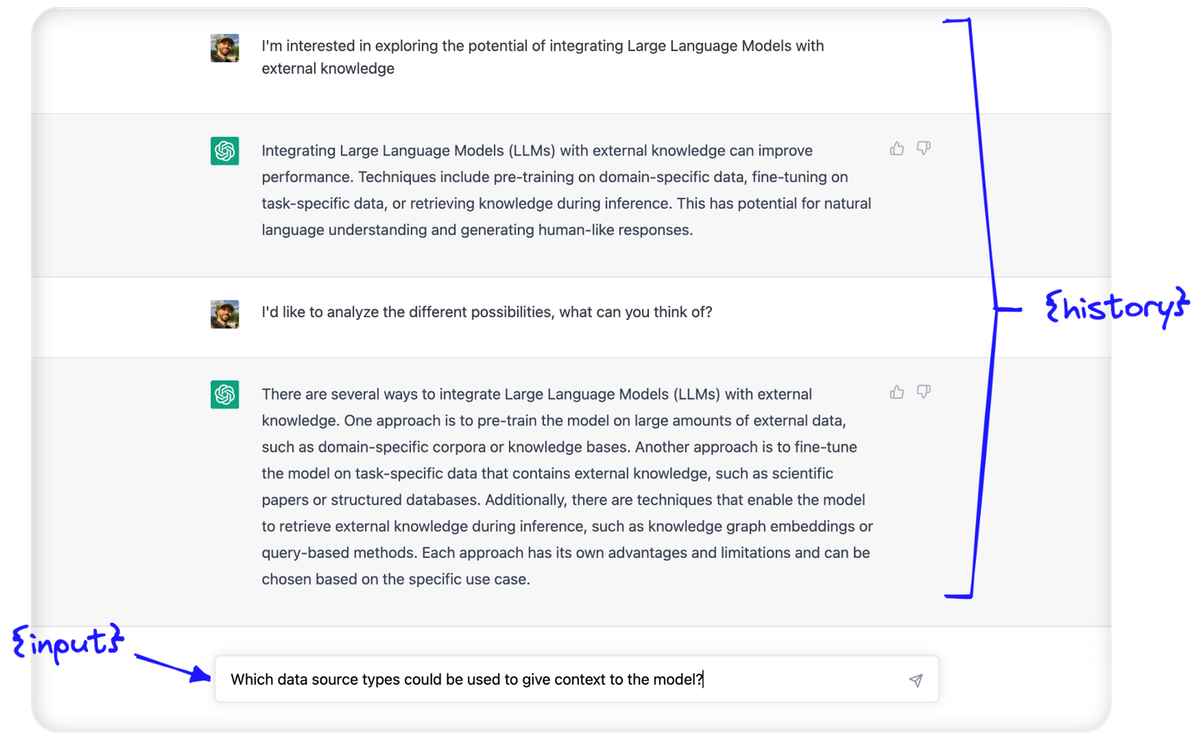

This diagram from Pinecone nails it: left side shows bloated history causing stalls; right side, buffered memory keeps things nimble. In your case, after step 1’s problem summary, step 2’s buffer adds customer pain points without rehashing.
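To make the buffering idea concrete, here is a minimal pure-Python sketch of the same behavior. It is not LangChain's implementation: `count_tokens`, `prune_buffer`, and `toy_summarize` are hypothetical names, the word-count tokenizer is a crude assumption, and the summarizer stands in for an LLM call.

```python
from typing import Callable, List, Tuple


def count_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


def prune_buffer(
    summary: str,
    recent: List[str],
    max_tokens: int,
    summarize: Callable[[str, List[str]], str],
) -> Tuple[str, List[str]]:
    """Move the oldest messages into the summary until `recent` fits the budget."""
    recent = list(recent)
    overflow: List[str] = []
    while recent and sum(count_tokens(m) for m in recent) > max_tokens:
        overflow.append(recent.pop(0))  # the oldest message leaves the raw buffer first
    if overflow:
        summary = summarize(summary, overflow)
    return summary, recent


def toy_summarize(summary: str, dropped: List[str]) -> str:
    # Placeholder for an LLM call: records only how many messages were condensed.
    previously = int(summary.split()[0]) if summary else 0
    return f"{previously + len(dropped)} earlier messages condensed"


summary, recent = prune_buffer(
    "",
    [
        "user: we solve late deliveries",
        "bot: how serious is it",
        "user: very, it costs shops money",
    ],
    max_tokens=10,
    summarize=toy_summarize,
)
print(summary)
print(recent)
```

With a budget of 10 "tokens", the two oldest messages are condensed and only the latest answer stays verbatim, which is the shape of context you want each step's summary prompt to see.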

But memory alone? Not enough. Pair it with prompt surgery.

Redesigning Prompts for Step-Specific Summaries

Your prompt’s solid on structure, but it lacks “update signals.” Models need explicit nudges: “Build on the prior summary, incorporating only new answers from this step.”

Here's a redesigned prompt template. Slot in {previous_summary} (carried over from the previous step), {current_step}, and {new_interactions} (the last 2-3 exchanges):

    prompt = """
    Role: You are a business idea summarizer. Create evolving summaries in fluent Persian (third-person singular) for a 6-step process.

    Previous summary (from step {previous_step}): {previous_summary}
    Current step: {current_step}
    Questions: {step_and_questions}
    New user inputs since last summary: {new_interactions}

    Guidelines:
    1. Update the prior summary with ONLY answers to current questions; ignore misc like greetings or step jumps.
    2. Make it distinct: highlight evolutions, add depth, avoid copying phrases verbatim.
    3. Keep concise (150-200 words), structured: intro idea overview, key answers, next implications.
    4. Third-person: "کاربر مشکل X را توصیف کرد..."

    Output ONLY the new summary, no extras.
    """

See the difference? {previous_summary} grounds the model, while "update with ONLY answers to current questions" and "make it distinct" push it to change the text. Test with your example: for "Current step is problem," it should draw on the fresh answers and skip the hellos.
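One way to fill the "new user inputs since last summary" slot is to keep a cursor into the history and advance it after every summary. The sketch below is an assumption about how you might track that, not part of LangChain; `StepTracker` and `MISC_MESSAGES` are hypothetical names, and the exact-match small-talk filter is only illustrative.

```python
from dataclasses import dataclass, field
from typing import List

MISC_MESSAGES = {"hello", "can i jump to next step?"}  # illustrative filter only


@dataclass
class StepTracker:
    history: List[str] = field(default_factory=list)
    cursor: int = 0  # index of the first message not yet summarized

    def add(self, message: str) -> None:
        self.history.append(message)

    def new_interactions(self) -> str:
        """Return messages added since the last summary, minus small talk."""
        fresh = self.history[self.cursor:]
        kept = [m for m in fresh if m.strip().lower() not in MISC_MESSAGES]
        return "\n".join(kept)

    def mark_summarized(self) -> None:
        self.cursor = len(self.history)


tracker = StepTracker()
tracker.add("hello")
tracker.add("We help shops track late deliveries.")
step1_inputs = tracker.new_interactions()
tracker.mark_summarized()

tracker.add("Can I jump to next step?")
tracker.add("Small retailers lose customers because of it.")
step2_inputs = tracker.new_interactions()
```

Because the cursor moves only when a summary is produced, two consecutive steps never receive the same "new inputs" text, which removes one common source of identical outputs.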

Pro tip: Use few-shot examples in the prompt for Persian fluency. One good/bad pair shows “identical = bad; evolved = good.”
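A good/bad pair can be appended to the prompt with plain string formatting. This is a sketch under assumptions: `GOOD_BAD_EXAMPLE` and `with_few_shot` are hypothetical names, and the English sentences are placeholders that you would replace with real Persian pairs from your own transcripts.

```python
GOOD_BAD_EXAMPLE = """\
Example of a BAD update (repeats the previous summary):
Previous: {bad_previous}
New: {bad_new}

Example of a GOOD update (adds the new answers):
Previous: {good_previous}
New: {good_new}
"""


def with_few_shot(base_prompt: str, example: dict) -> str:
    """Append one good/bad pair, leaving the base prompt's own placeholders intact."""
    return base_prompt.rstrip() + "\n\n" + GOOD_BAD_EXAMPLE.format(**example)


# Placeholder sentences; they must not contain curly braces,
# because the combined prompt is formatted again later.
example = {
    "bad_previous": "The user described late deliveries as the problem.",
    "bad_new": "The user described late deliveries as the problem.",
    "good_previous": "The user described late deliveries as the problem.",
    "good_new": "The user described late deliveries as the problem "
                "and said small retailers are affected most.",
}

base = "Previous summary: {previous_summary}\nNew user inputs: {new_interactions}"
full_prompt = with_few_shot(base, example)
rendered = full_prompt.format(
    previous_summary="(none)",
    new_interactions="We serve small shops.",
)
```

Only the example slots are filled at build time, so `{previous_summary}` and `{new_interactions}` stay available for the per-step call.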

Implementing ConversationSummaryBufferMemory in Python LangChain

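Time for code. Install the packages with pip install langchain langchain-openai (or your LLM provider's integration). Recent LangChain releases mark the legacy memory classes as deprecated, so check that the import path below matches your installed version. Here's a sketch of the chain for your 6-step bot: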

    from langchain.memory import ConversationSummaryBufferMemory
    from langchain_core.output_parsers import StrOutputParser
    from langchain_core.prompts import PromptTemplate
    from langchain_openai import ChatOpenAI  # Or your model

    llm = ChatOpenAI(model="gpt-4o-mini", temperature=0.1)

    # Memory setup: buffer recent, summarize old
    memory = ConversationSummaryBufferMemory(
        llm=llm,
        max_token_limit=500,  # Tune for Persian ~200 words
        return_messages=False,  # Plain-text history, since the prompt is a string template
        memory_key="chat_history",
    )

    # `prompt` is the redesigned template string from the previous section
    prompt_template = PromptTemplate.from_template(prompt)
    chain = prompt_template | llm | StrOutputParser()

    # In your step loop (`steps` is your list of per-step question blocks):
    previous_summary = ""
    for step_num, questions in enumerate(steps):
        new_interactions = get_last_messages(3)  # Custom func returning recent turns as text
        result = chain.invoke({
            "previous_step": f"Step {step_num}" if step_num else "none",
            "previous_summary": previous_summary,
            "current_step": f"Step {step_num + 1}",
            "step_and_questions": questions,
            "new_interactions": new_interactions,
        })
        # Record the exchange; older turns are condensed once the token limit is exceeded.
        # To show the condensed history to the model, add a {chat_history} slot to the
        # template and pass memory.load_memory_variables({})["chat_history"].
        memory.save_context({"input": new_interactions}, {"output": result})
        previous_summary = result  # Track the last summary explicitly, not the last message
        print(result)

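This runs once per step, and memory persists across calls. By step 4, the buffer typically holds a condensed summary of the earlier steps plus the raw messages from the most recent one. Because each call receives the previous summary and only the new answers, consecutive outputs should diverge. According to the LangChain docs, the same pattern carries over to agents.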

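What if history still grows too large? Call memory.save_context(inputs, outputs) yourself after each step so you control exactly what gets stored, then call memory.prune() to fold anything over the token limit into the summary.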

Advanced Chain Logic with LangGraph Agents

For 6 steps, basic chains feel clunky. Enter LangGraph, LangChain's graph-based framework for stateful agents. Model your process as nodes: "ask questions" → "summarize step" → "check uniqueness" → next.

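    import operator
    from difflib import SequenceMatcher
    from typing import Annotated, TypedDict

    from langgraph.graph import END, StateGraph

    class AgentState(TypedDict):
        messages: Annotated[list, operator.add]  # Reducer appends new messages
        step: int
        summary_history: list[str]

    def similarity(a: str, b: str) -> float:
        # Stand-in lexical ratio; swap in embedding cosine similarity for Persian text
        return SequenceMatcher(None, a, b).ratio()

    # Node for summary with duplicate check
    def summarize_step(state: AgentState) -> dict:
        # generate_summary is a placeholder for your own chain call for the current step
        draft = generate_summary(state)
        history = state["summary_history"]
        if history and similarity(history[-1], draft) > 0.9:
            # Near-duplicate: regenerate with a stricter instruction instead of tagging the text
            draft = generate_summary(state, force_new=True)
        return {"messages": [draft], "summary_history": history + [draft], "step": state["step"] + 1}

    graph = StateGraph(AgentState)
    graph.add_node("summarize", summarize_step)
    graph.set_entry_point("summarize")
    graph.add_edge("summarize", END)  # Replace with conditional edges to loop over steps 1-6
    app = graph.compile()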

LangGraph shines for conditional flows: if a duplicate is detected (for example via embedding similarity), route back to a refine node instead of moving on. Check the LangGraph documentation for complete agent setups. Your bot becomes stateful and easier to reason about.
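The "detect, then refine" loop can be prototyped without a graph at all. The sketch below assumes a `generate(attempt)` callable that wraps your chain; `summarize_until_distinct` and `lexical_similarity` are hypothetical names, and the difflib ratio is only a stand-in for the embedding comparison mentioned above.

```python
from difflib import SequenceMatcher
from typing import Callable, List


def lexical_similarity(a: str, b: str) -> float:
    # Character-level ratio in [0, 1]; a stand-in for embedding similarity.
    return SequenceMatcher(None, a, b).ratio()


def summarize_until_distinct(
    generate: Callable[[int], str],
    previous: str,
    threshold: float = 0.9,
    max_attempts: int = 3,
    similarity: Callable[[str, str], float] = lexical_similarity,
) -> str:
    """Call `generate(attempt)` until the draft differs enough from `previous`."""
    draft = generate(0)
    for attempt in range(1, max_attempts):
        if not previous or similarity(previous, draft) <= threshold:
            break
        draft = generate(attempt)  # e.g. re-prompt with a stricter instruction
    return draft


# Fake generator: repeats the old summary once, then produces an updated one.
drafts: List[str] = [
    "The user solves late deliveries.",
    "The user solves late deliveries for small retailers who lose repeat customers.",
]
calls: List[int] = []


def fake_generate(attempt: int) -> str:
    calls.append(attempt)
    return drafts[min(attempt, len(drafts) - 1)]


result = summarize_until_distinct(
    fake_generate, previous="The user solves late deliveries."
)
```

Capping the attempts matters: if the user really added nothing new in a step, the loop gives up and returns the last draft rather than retrying forever.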

Testing and Debugging with LangSmith

Don't guess; trace. LangSmith (LangChain's tracing and debugging tool) logs every chain invocation. Enable it by setting os.environ["LANGCHAIN_TRACING_V2"] = "true" and providing your LangSmith API key. Replay steps and spot where near-identical inputs produce repeated summaries.
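A small setup snippet for tracing might look like the following. The project name is a hypothetical placeholder, and the sketch assumes the API key is already exported in your shell as LANGCHAIN_API_KEY rather than hard-coded.

```python
import os

# Enable tracing before any chain is constructed or invoked.
os.environ["LANGCHAIN_TRACING_V2"] = "true"
# Hypothetical project name; groups the six steps' runs together in the UI.
os.environ.setdefault("LANGCHAIN_PROJECT", "six-step-summaries")

# The API key should come from the environment or a secrets manager, not source code.
tracing_ready = bool(os.environ.get("LANGCHAIN_API_KEY"))
if not tracing_ready:
    print("LANGCHAIN_API_KEY is not set; traces are unlikely to be uploaded.")
```

Running each of the 6 steps under one project makes it easier to compare the rendered prompts of two consecutive steps side by side.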

Unit tests? Mock history:

    # Pytest example; run_step is your own wrapper that runs one step and returns the summary text
    def test_unique_summaries():
        memory.clear()  # Start from an empty history
        summary1 = run_step(...)  # Step 1
        summary2 = run_step(...)  # Step 2
        assert similarity(summary1, summary2) < 0.85  # Using cosine sim

Community threads on Stack Overflow point the same way: most duplicate-summary reports are resolved by tightening memory and prompts. Iterate fast.
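If you don't want to call an embedding model inside unit tests, a dependency-free cosine similarity over character n-grams is a reasonable first approximation. This is an assumed stand-in, not what LangChain provides; `char_ngrams` and `similarity` are hypothetical helpers, and n-gram overlap measures wording rather than meaning.

```python
import math
from collections import Counter


def char_ngrams(text: str, n: int = 3) -> Counter:
    """Count overlapping character n-grams; needs no Persian-aware tokenizer."""
    compact = " ".join(text.split())  # normalize whitespace
    return Counter(compact[i:i + n] for i in range(max(len(compact) - n + 1, 1)))


def similarity(a: str, b: str) -> float:
    """Cosine similarity between character n-gram count vectors, in [0, 1]."""
    va, vb = char_ngrams(a), char_ngrams(b)
    dot = sum(count * vb[gram] for gram, count in va.items())
    norm = math.sqrt(sum(c * c for c in va.values())) * math.sqrt(
        sum(c * c for c in vb.values())
    )
    return dot / norm if norm else 0.0


# Two of the example questions from the problem step share some wording,
# so their score lands strictly between 0 and 1.
score = similarity("چه مشکلی را حل میکنید؟", "این مشکل چقدر جدی است؟")
print(round(score, 3))
```

For the real threshold (0.85 in the test above), calibrate against a handful of summaries you consider duplicates and a handful you consider distinct, since the right cutoff depends on the similarity function you choose.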

Sources

- Pinecone LangChain Conversational Memory — Detailed guide on buffer memory to prevent repetitive chatbot outputs: https://www.pinecone.io/learn/series/langchain/langchain-conversational-memory/

- LangChain Python Docs - Summary Buffer Memory — Official implementation reference for ConversationSummaryBufferMemory: https://python.langchain.com/docs/modules/memory/types/summary_buffer

- LangChain Docs - How to Summarize — Strategies for map-reduce and contextual summaries in chains: https://python.langchain.com/docs/how_to/summarize_map_reduce/

- LangGraph Documentation — Building stateful multi-step agents with memory persistence: https://python.langchain.com/docs/langgraph

- Stack Overflow LangChain Tag — Community discussions on memory and prompt issues: https://stackoverflow.com/questions/tagged/langchain

Conclusion

Wrap your LangChain chatbot in ConversationSummaryBufferMemory, use step-aware prompts that ask the model to evolve the previous summary, and duplicates should become rare across all 6 steps of your Persian business flow. LangGraph takes it further for conditional flows. Start simple: swap the memory today, test two consecutive steps, then scale up. You'll get crisp summaries that build on each other and actually guide users forward. Treat the code above as a starting point and adjust it to your setup.

Duplicate summaries in LangChain chatbots arise when prompts or memory reuse the same static conversation context without step-specific differentiation. Use ConversationSummaryBufferMemory to track a running summary of past interactions plus recent messages within a token limit, so outputs evolve across your 6-step process.

Embed the current step number in the prompt, e.g., "Summarize **up to step {step_number}** in Persian, building on and differing from prior summaries by focusing on new answers." If summaries still repeat, prune or reset the memory between steps. A minimal setup with ConversationChain and an OpenAI chat model looks like this:

    from langchain.chains import ConversationChain
    from langchain.memory import ConversationSummaryBufferMemory
    from langchain_openai import ChatOpenAI  # Or your model

    llm = ChatOpenAI(model="gpt-4o-mini", temperature=0.1)
    memory = ConversationSummaryBufferMemory(llm=llm, max_token_limit=500)
    chain = ConversationChain(llm=llm, memory=memory)